This post was updated on 6 Jan 2017 to cover new versions of Docker.

It's clear from looking at the questions asked on the Docker IRC channel (#docker on Freenode), Slack and Stack Overflow that there's a lot of confusion over how volumes work in Docker. In this post, I'll try to explain how volumes work and present some best practices. Whilst this post is primarily aimed at Docker users with little to no knowledge of volumes, even experienced users are likely to learn something as there are some subtleties that many people aren't aware of.

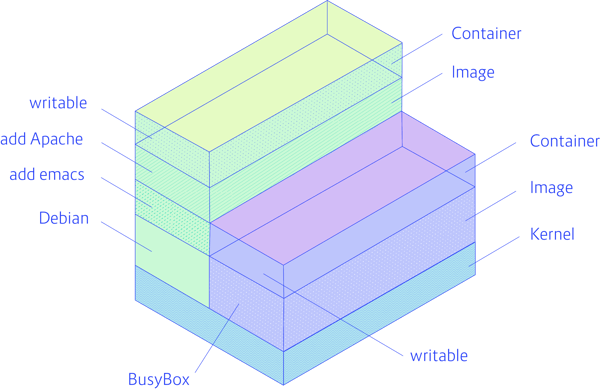

In order to understand what a Docker volume is, we first need to be clear about how the filesystem normally works in Docker. Docker images are stored as a series of read-only layers. When we start a container, Docker takes the read-only image and adds a read-write layer on top. If the running container modifies an existing file, the file is copied out of the underlying read-only layer and into the top-most read-write layer where the changes are applied. The version in the read-write layer hides the underlying file, but does not destroy it -- it still exists in the underlying layer. When a Docker container is deleted, relaunching the image will start a fresh container without any of the changes made in the previously running container -- those changes are lost. Docker calls this combination of read-only layers with a read-write layer on top a Union File System.
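To see the read-write layer in action, here's a minimal sketch wrapped in a shell function. The function name, the container name cow-demo and the file /tmp/scratch-file are my own choices for illustration rather than anything Docker defines; docker diff lists what a container has changed relative to its image.

```shell
# Hypothetical helper: a file created in one container's read-write layer
# is absent from a fresh container started from the same image.
show_layer_isolation() {
    image="${1:-debian}"
    # First container: create a file in its own read-write layer.
    docker run --name cow-demo "$image" touch /tmp/scratch-file
    # List what that container changed relative to the image.
    docker diff cow-demo
    # Second container from the same image: the file does not exist there.
    docker run --rm "$image" ls /tmp/scratch-file \
        || echo "scratch-file is not in the fresh container"
    # Clean up the first container.
    docker rm cow-demo
}
```

Running show_layer_isolation debian should list the new file among the first container's changes and then report it missing from the second container.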

In order to be able to save (persist) data and also to share data between containers, Docker came up with the concept of volumes. Quite simply, volumes are directories (or files) that are outside of the default Union File System and exist as normal directories and files on the host filesystem.

There are several ways to initialise volumes, with some subtle differences that are important to understand. The most direct way is to declare a volume at run-time with the -v flag:

docker run -it --name vol-test -h CONTAINER -v /data debian /bin/bash

(out) root@CONTAINER:/# ls /data

(out) root@CONTAINER:/#

This will make the directory /data inside the container live outside the Union File System and directly accessible on the host. Any files that the image held inside the /data directory will be copied into the volume. We can find out where the volume lives on the host by using the docker inspect command on the host (open a new terminal and leave the previous container running if you're following along):

docker inspect -f "{{json .Mounts}}" vol-test | jq .

And you should see something like:

(out) [

(out) {

(out) "Name": "8e0b3a9d5c544b63a0bbaa788a250e6f4592d81c089f9221108379fd7e5ed017",

(out) "Source": "/var/lib/docker/volumes/8e0b3a9d5c544b63a0bbaa788a250e6f4592d81c089f9221108379fd7e5ed017/_data",

(out) "Destination": "/data",

(out) "Driver": "local",

(out) "Mode": "",

(out) "RW": true,

(out) "Propagation": ""

(out) }

(out) ]

I've used a Go template to pull out the data we're interested in and piped the output into jq for pretty printing. Now that we know the name of the volume, we can also get similar information from the docker volume inspect command:

docker volume inspect 8e0b3a9d5c544b63a0bbaa788a250e6f4592d81c089f9221108379fd7e5ed017

(out) [

(out) {

(out) "Name": "8e0b3a9d5c544b63a0bbaa788a250e6f4592d81c089f9221108379fd7e5ed017",

(out) "Driver": "local",

(out) "Mountpoint": "/var/lib/docker/volumes/8e0b3a9d5c544b63a0bbaa788a250e6f4592d81c089f9221108379fd7e5ed017/_data",

(out) "Labels": null,

(out) "Scope": "local"

(out) }

(out) ]

In both cases, the output tells us that Docker has mounted /data inside the container as a directory somewhere under /var/lib/docker. Let's add a file to the directory from the host:

sudo touch /var/lib/docker/volumes/8e0b3a9d5c544b63a0bbaa788a250e6f4592d81c089f9221108379fd7e5ed017/_data/test-file

Then switch back to our container and have a look:

(out) root@CONTAINER:/# ls /data

(out) test-file

Changes are reflected immediately as the directory on the container is simply a mount of the directory on the host.
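Copying the long volume ID around by hand gets tedious. As a rough sketch, the two lookups can be wrapped in small shell functions; the function names are hypothetical, while the -f Go templates read the same Mounts, Source and Mountpoint fields that appear in the output above.

```shell
# Print the host directory behind every mount of a container, one per line.
# $1: container name or ID, e.g. vol-test
volume_sources() {
    docker inspect -f '{{range .Mounts}}{{println .Source}}{{end}}' "$1"
}

# Print the host directory behind a single volume.
# $1: volume name (the long ID also works)
volume_mountpoint() {
    docker volume inspect -f '{{.Mountpoint}}' "$1"
}
```

With the container from earlier still around, volume_sources vol-test should print the same Source path that docker inspect reported.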

The exact same effect can be achieved by using a VOLUME instruction in a Dockerfile:

FROM debian:wheezy

VOLUME /data

We can also create volumes using the docker volume create command:

docker volume create --name my-vol

(out) my-vol

Which we can then attach to a container at run-time, e.g.:

docker run -d -v my-vol:/data debian

(out) 0794c8abf033192bff4df665f6c34b71f240146146c1e3654569c8c0a656c24f

This example will mount the my-vol volume at /data inside the container.
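Because a named volume outlives the containers that use it, one container can write data and a later one can read it back. Here's a small sketch, assuming the my-vol volume from above exists; the function name and the greeting file are made up for the example.

```shell
# Write a file through one short-lived container, then read it back
# from a second one that mounts the same named volume.
# $1: volume name (defaults to my-vol)
named_volume_roundtrip() {
    volume="${1:-my-vol}"
    # --rm removes each container on exit; volumes given by name are kept.
    docker run --rm -v "$volume":/data debian sh -c 'echo hello > /data/greeting'
    docker run --rm -v "$volume":/data debian cat /data/greeting
}
```

If everything is wired up as assumed, the second container should print the line written by the first.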

There is another major use case for volumes that can only be accomplished through the -v flag -- mounting a specific directory from the host into a container. For example:

docker run -v /home/adrian/data:/data debian ls /data

Will mount the directory /home/adrian/data on the host as /data inside the container. Any files already existing in the /home/adrian/data directory will be available inside the container. This is very useful for sharing files between the host and the container, for example mounting source code to be compiled. The host directory for a volume cannot be specified from a Dockerfile, in order to preserve portability (the host directory may not be available on all systems). When this form of the -v argument is used, any files in the image under the directory are not copied into the volume. Such volumes are not "managed" by Docker as per the previous examples -- they will not appear in the output of docker volume ls and will never be deleted by the Docker daemon.
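When the container only needs to read the host files, the mount can be made read-only by appending :ro. The sketch below is one way to wrap that; the function name is hypothetical, and it resolves its argument to an absolute path first because a host path given to -v has to be absolute (anything else is taken as a volume name).

```shell
# List a host directory from inside a throwaway container, mounted read-only.
# $1: host directory (relative or absolute)
list_host_dir_in_container() {
    # Resolve to an absolute path; bail out if the directory does not exist.
    host_dir=$(cd "$1" && pwd) || return 1
    docker run --rm -v "$host_dir":/data:ro debian ls /data
}
```

For example, list_host_dir_in_container /home/adrian/data should show the same listing as the command above, but the container would not be able to write to the directory.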

Sharing Data

To give another container access to a container's volumes, we can provide the --volumes-from argument to docker run. For example:

docker run -it -h NEWCONTAINER --volumes-from vol-test debian /bin/bash

(out) root@NEWCONTAINER:/# ls /data

(out) test-file

(out) root@NEWCONTAINER:/#

This works whether vol-test is running or not. A volume will never be deleted as long as a container is linked to it. We could also have mounted the volume by giving its name to the -v flag, i.e.:

docker run -it -h NEWCONTAINER -v 8e0b3a9d5c544b63a0bbaa788a250e6f4592d81c089f9221108379fd7e5ed017:/my-data debian /bin/bash

(out) root@NEWCONTAINER:/# ls /my-data

(out) test-file

Note that using this syntax allows us to mount the volume to a different directory inside the container.
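The two forms can also be combined. As an illustrative sketch (the function name and archive name are my own), a throwaway container can borrow the volumes of vol-test with --volumes-from and, at the same time, mount the current host directory to archive the contents of /data:

```shell
# Archive the /data volume of an existing container into the current
# host directory as data-backup.tar.
# $1: container whose volumes to borrow (defaults to vol-test)
backup_container_volume() {
    container="${1:-vol-test}"
    docker run --rm --volumes-from "$container" -v "$(pwd)":/backup debian \
        tar cf /backup/data-backup.tar -C / data
}
```

This assumes the source container mounts its volume at /data, as vol-test does in this post.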

Data Containers

Prior to the introduction of the docker volume commands, it was common to use "data containers" for storing persistent and shared data such as databases or configuration data. This approach meant that the container essentially became a "namespace" for the data - a handle for managing it and sharing with other containers. However, in modern versions of Docker, this approach should never be used - simply create named volumes using docker volume create --name instead.

Permissions and Ownership

Often you will need to set the permissions and ownership on a volume, or initialise the volume with some default data or configuration files. A key point to be aware of here is that anything after the VOLUME instruction in a Dockerfile will not be able to make changes to that volume, e.g.:

FROM debian:wheezy

RUN useradd foo

VOLUME /data

RUN touch /data/x

RUN chown -R foo:foo /data

Will not work as expected. We want the touch command to run in the image's filesystem but it is actually running in the volume of a temporary container. The following will work:

FROM debian:wheezy

RUN useradd foo

RUN mkdir /data && touch /data/x

RUN chown -R foo:foo /data

VOLUME /data

Docker is clever enough to copy any files that exist in the image under the volume mount into the volume and set the ownership correctly. This won't happen if you specify a host directory for the volume (so that host files aren't accidentally overwritten).

If you can't set permissions and ownership in a RUN command, you will have to do so using a CMD or ENTRYPOINT script that runs when the container is started.
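As a rough idea of what such a start-up script might contain, here's a sketch. The function name is hypothetical, and the foo user and /data path are simply carried over from the Dockerfile above. A real script would usually also drop root privileges before starting the main process, which I've left out.

```shell
#!/bin/sh
# Sketch of the ownership fix an ENTRYPOINT script could apply at start-up.

# $1: directory where the volume is mounted
# $2: owner in user:group form
fix_volume_ownership() {
    chown -R "$2" "$1"
}

# A complete entrypoint script would call the function and then hand over
# to the container's command (the CMD arguments arrive as "$@"):
#
#   fix_volume_ownership /data foo:foo
#   exec "$@"
```

Because the script runs each time the container starts, the ownership is corrected even when the volume was created or populated outside of the image build.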

Deleting Volumes

Chances are, if you've been using docker rm to delete your containers, you probably have lots of orphan volumes lying about taking up space.

Volumes are only automatically deleted if the parent container is removed with the docker rm -v command (the -v is essential) or the --rm flag was provided to docker run. Even then, a volume will only be deleted if no other container links to it. Volumes linked to user-specified host directories are never deleted by Docker.

To have a look at the volumes in your system use docker volume ls:

docker volume ls

(out) DRIVER VOLUME NAME

(out) local 8e0b3a9d5c544b63a0bbaa788a250e6f4592d81c089f9221108379fd7e5ed017

(out) local my-vol

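To delete all volumes not in use, try the following. Docker refuses to remove a volume that is still attached to a container, so this prints an error for each volume in use and deletes the rest: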

docker volume rm $(docker volume ls -q)
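The command above also tries to remove volumes that are still in use, which Docker rejects with an error. A narrower sketch is to ask docker volume ls for dangling volumes only, using its dangling=true filter; the function names below are hypothetical.

```shell
# List the names of volumes that no container references.
list_unused_volumes() {
    docker volume ls -q -f dangling=true
}

# Remove unused volumes one at a time, so that a failure on one volume
# does not prevent the others from being removed.
remove_unused_volumes() {
    list_unused_volumes | while read -r name; do
        docker volume rm "$name"
    done
}
```

It's worth running list_unused_volumes on its own first and checking the names before deleting anything. Docker 1.13 and later also provide docker volume prune, which does a similar clean-up in one step.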

Conclusion

There is quite a lot to volumes, but once you understand the underlying philosophy it should be fairly intuitive. Using volumes effectively is essential to an efficient Docker workflow, so it's worth playing around with the commands until you understand how they work.
