Many developers use Windows as their development platform, whether through personal preference, company policy, or a tooling dependency. As more frameworks and services become available on Linux, such as Microsoft’s .NET Core, Linux becomes more attractive as a target environment for running and testing projects, especially given the abundance of tooling and applications available on Linux itself.

Therefore, a hybrid approach may be needed, and this means running Linux inside a VM with the help of various virtualisation solutions. However, this often comes with a significant configuration and performance overhead.

This blog post looks at a possible solution that lets us work in both worlds in a way that’s simple and easy to manage. It may well become the standard way to solve this problem in the future.

The Limits of Docker Desktop

I like to use Windows myself, but I develop software that runs primarily on Linux kernels. The obvious answer is to use Docker, a popular tool to create, deploy, and run containers, which package applications.

Even if you are only running Windows, you can run Docker containers with Docker Desktop, which uses Hyper-V as a virtualisation layer. If your Docker Desktop is in ‘Linux-Mode’, you can run whole distributions very quickly and simply on Windows with that approach.

This solution has been widely used for a while now. But it doesn’t address the fact that a lot of tooling, scripts, and repositories are written with only Linux in mind, which matters especially in a distributed team where some developers use Linux and others use Windows machines.

Developing on Windows while targeting Linux is often not seamless, because scripts, tooling, and diagnostics need to run on a Linux host in order to interact with the application, a Docker container, or a Kubernetes cluster. While Docker Desktop offers this in some form through Docker-in-Docker or multi-container setups, the user can quickly lose control and has to maintain a ‘service container’ that includes the necessary tooling (for example, ‘kubectl’ with autocompletion and extensions, which are only available on Linux). The Hyper-V virtualisation layer also adds complexity in many areas, especially around volume mounts and networking.

So a ‘native’ Linux Distribution, running alongside Windows, with little to no VM setup and performance overhead, might be a possible solution to bridge the gap between the two. This is where we get to Windows Subsystem for Linux, or WSL.

WSL2

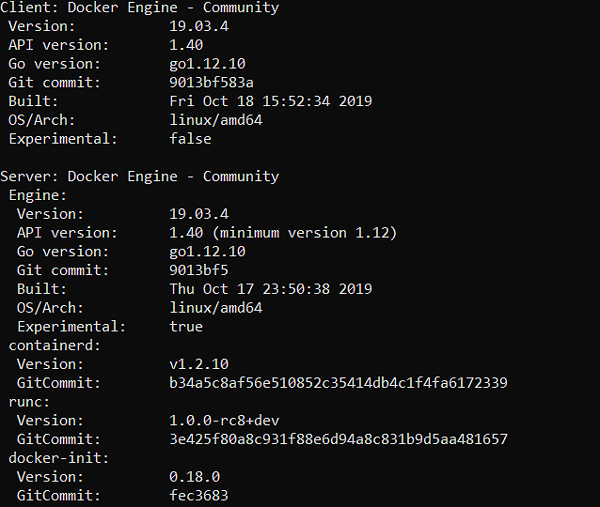

There are two versions of WSL available. While version 1 runs a compatibility layer that translates Linux system calls for the Windows kernel, WSL2 uses a lightweight VM and therefore a real Linux kernel. This enables better system-call compatibility, so it can be adopted for a wider range of use cases.

For example, WSL1 is not able to run a Docker daemon, while WSL2 can. WSL2 uses its own, very lightweight virtualisation layer to run the Linux kernel, and the kernel itself is managed by Windows Update.

You can, however, choose your own Linux distribution to install. In the example explained in this post, we’ll be using Debian.

As of now, WSL2 is not part of an official Windows release and probably won’t be in this year’s November 1909 release. We can therefore expect this feature to be generally available in 2020. The current functionality could contain bugs and may even crash your system (it never did for me, but your experience may vary). If you still want to try it out, you can obtain WSL2 by opting in to the Windows Insider Programme.

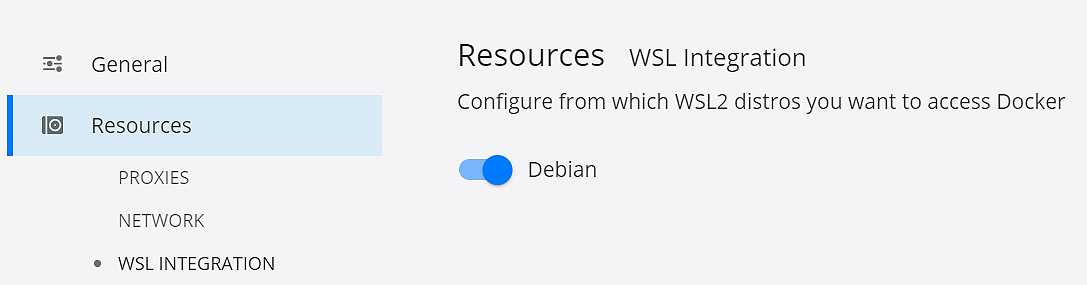

Docker Desktop (Edge)

So let’s get this up and running, install Docker into it, and even fire off some kubectl commands, all in Linux, on Windows. First, make sure your Windows build is 18917 or later. Then you can switch a distribution to WSL2 with the PowerShell command ‘wsl --set-version Debian 2’. Other distributions, such as Ubuntu, openSUSE, and Kali Linux, are available as well. The conversion may take a couple of minutes.
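Once the conversion has finished, one way to confirm from inside the distribution that it really runs under WSL2 is to look at the kernel release string. The following is a minimal sketch based on a naming heuristic, not an official interface: it assumes that WSL2 kernels report a release containing `microsoft-standard` and that WSL1 reports one containing `Microsoft`. The function name `wsl_flavor` is made up for this illustration, and custom kernels may not follow the pattern.

```shell
# Heuristic sketch (an assumption, not an official API): classify a kernel
# release string, as printed by `uname -r`, as WSL1, WSL2, or neither.
wsl_flavor() {
  case "$1" in
    *microsoft-standard*) echo "wsl2" ;;     # WSL2 runs a Linux kernel built by Microsoft
    *Microsoft*)          echo "wsl1" ;;     # WSL1 reports an emulated kernel release
    *)                    echo "not-wsl" ;;  # anything else, e.g. a regular Linux host
  esac
}

# Classify the kernel of the current shell.
wsl_flavor "$(uname -r)"
```

If this prints `wsl1` for a distribution you just converted, listing the distributions and their versions from PowerShell is the more authoritative check.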

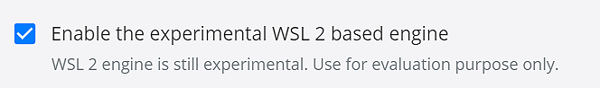

We also need Docker Desktop from the Edge channel (Build greater than 40323). Once we have Docker Desktop installed, we can enable the experimental WSL2 engine:

We also need to specify from which distribution we want to interact with the Docker daemon. The settings page lists all the distributions we have installed, which in my case is only Debian. If I had Ubuntu installed, it would show up as well. Enabling the setting makes it possible for a Docker client to talk to the daemon inside a special WSL2 distribution (more on that later):

As of now, the Docker CLI is not installed inside Debian automatically, so we simply install it ourselves:

> sudo apt-get update

> sudo apt-get install docker-ce-cli

Simply follow the official Docker installation instructions. You don’t need the daemon or containerd; both already run in the special distributions that provide this functionality. So we only need to care about the clients.
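The `docker-ce-cli` package comes from Docker’s own apt repository, so that repository has to be registered before the install command can find it. As a rough sketch of that step, the helper below only prints the source line for the repository. The function name `docker_apt_source` and the default key path are assumptions made for illustration, and the official instructions remain the reference for downloading the repository key.

```shell
# Print the apt source line for Docker's Debian repository.
# $1 = CPU architecture (e.g. amd64), $2 = Debian codename (e.g. buster),
# $3 = path to the downloaded repository key (assumed default below).
docker_apt_source() {
  arch="$1"
  codename="$2"
  keyring="${3:-/etc/apt/keyrings/docker.asc}"
  echo "deb [arch=${arch} signed-by=${keyring}] https://download.docker.com/linux/debian ${codename} stable"
}

# Typical use inside the Debian distribution (not run here; it needs sudo and
# assumes the repository key has already been saved to the keyring path):
#   docker_apt_source "$(dpkg --print-architecture)" \
#       "$(. /etc/os-release && echo "$VERSION_CODENAME")" \
#     | sudo tee /etc/apt/sources.list.d/docker.list
#   sudo apt-get update
#   sudo apt-get install docker-ce-cli
```

Only the client package is installed this way, which matches the setup described here: the daemon stays inside the distributions managed by Docker Desktop.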

This results in the following Docker version output:

In the background, a couple of things happened. Checking the Hyper-V status, we realise that the VM isn’t actually running. That is because WSL2 uses its own virtualisation technology, and the Docker CLIs on Windows and Debian speak to the daemon inside a special WSL2 distribution that Docker Desktop installed for us.

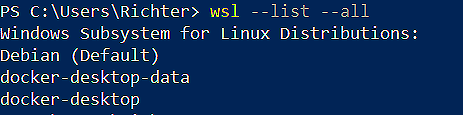

When we list our WSL distributions in PowerShell, we get something interesting:

Debian was expected, as I installed it via the Microsoft Store. But Docker Desktop set up two more distributions for us, which enable us to use Docker the new way.

They work using LinuxKit, running and isolating the daemon, enabling networking and volume mounting, managing the images, and much more. This is basically the same approach as the Hyper-V and Mac solutions, but it doesn’t rely on Hyper-V or Samba share components to interact with the host system. With this change, Docker and Microsoft don’t have to reimplement pre-existing features in a separate channel. As these distributions run in the background, we don’t really need to interact with them at all.

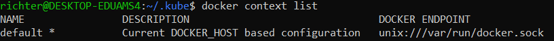

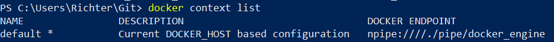

One note on Docker context, though: Both the Linux and Windows Docker-Clients find the same daemon inside the docker-desktop distribution via Docker Context. Docker Desktop should configure both clients automatically with this context.

Debian:

Windows:

Local Kubernetes

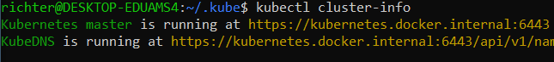

Now that we have Docker running, we can test whether Kubernetes works. We don’t have to use Minikube, k3s, or KIND. We can enable Kubernetes directly in Docker Desktop, which will run a local single-node cluster.

Docker Desktop will write the correct kubeconfig to C:\Users\<username>\.kube. You may have to point your Linux kubectl at that kubeconfig as well.
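One way to do this from inside the Linux distribution is to set the `KUBECONFIG` environment variable to the Windows file, which WSL exposes under `/mnt/c` by default. This is a minimal sketch under two assumptions: the file uses kubectl’s default name `config`, and the C: drive is mounted at the default location. The helper name `windows_kubeconfig` and the user name `alice` are placeholders.

```shell
# Build the WSL path to the kubeconfig that Docker Desktop writes on Windows.
# $1 = Windows user name (placeholder; substitute your own).
windows_kubeconfig() {
  echo "/mnt/c/Users/$1/.kube/config"
}

# Point the Linux kubectl at that file for the current shell session.
# "alice" is a hypothetical user name used only for illustration.
export KUBECONFIG="$(windows_kubeconfig alice)"
```

Adding the `export` line to your shell profile makes the setting persistent. Copying the file into the home directory inside the distribution would also work, but the copy would then need to be refreshed whenever Docker Desktop rewrites the original.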

After doing this, we can use kubectl on Windows and Linux, talking to the same local cluster. I prefer to use the Linux kubectl because, among other reasons, I get autocompletion and many nifty tools like KNS.

Ta-da!

So, let’s run a workload:

For this demo I’m running the microservices demo, using option three to get it up and running quickly. This demo runs multiple pods and services in order to serve a webshop.

Running ‘kubectl apply -f ./release/kubernetes-manifests.yaml’ will run the whole setup in one command.

After a little waiting, we can access our webshop on localhost on Linux and Windows.

Note that the LoadBalancer service actually uses localhost as its external IP, so we don’t have to use port-forwarding.

Takeaways

So we got the current Linux version of Docker running on Windows without VirtualBox or Hyper-V, and showed off basic Kubernetes functionality without any third-party solutions. We have a local setup to play around with, switch between environments, and try things out. It is certainly a fast way to learn Linux, Docker, and Kubernetes, all the while staying in the familiar Windows environment.

With the development of WSL2 and its integration with Docker Desktop on the Edge channel, we can see a trend of Linux tooling and apps being integrated more natively into Windows. This lets a lot of people work seamlessly with these environments without giving up their OS or resorting to heavy virtualisation techniques. There is huge potential here for people on both Windows and Linux, and collaboration and inclusion can only be a good thing for developing, deploying, running, and testing our apps.

As WSL2 is expected to go into general availability next year, we can expect to see more tooling, features, and improvements, closing the gap between development on Windows, and running workloads and tooling on Linux.

You can already interact with WSL2 in VSCode through this plugin, which lets you edit files inside WSL2 and run and debug Linux apps.

With this big architectural change, we get closer and closer to a native Linux experience for Windows machines. All the while, it enables us to use both worlds, side-by-side, seamlessly and with good performance at the same time. Something we can definitely look forward to.
