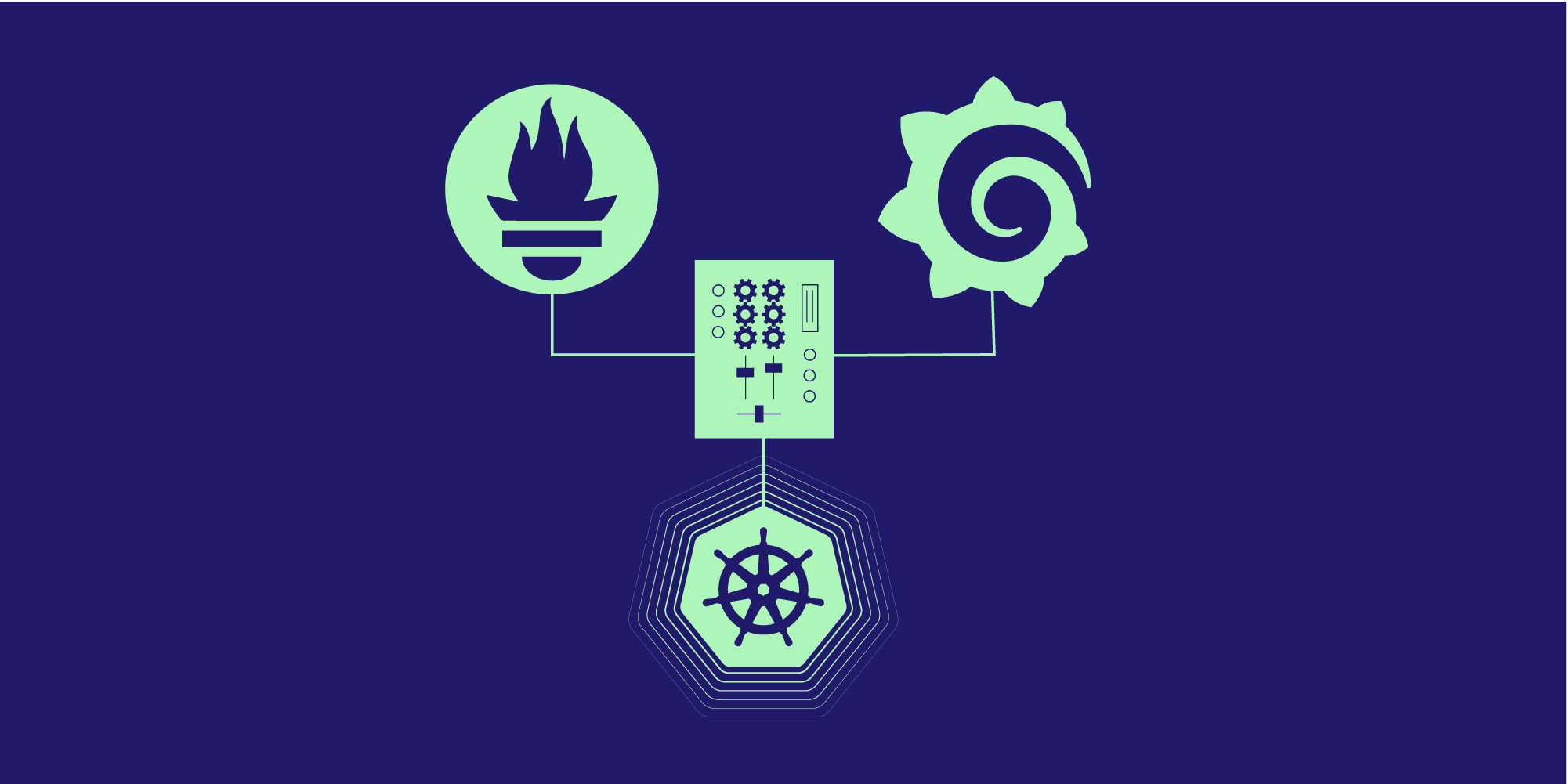

The term Kubernetes monitoring mixins appears in many Kubernetes-related projects involving Prometheus and Grafana. Despite its broad usage, details about it are not easy to find, and mixins are often used without being explicitly mentioned (for example in prometheus-operator). In this article, we are going to explore what they are and how you can use them in your Kubernetes cluster.

Specifically, we are going to define what Kubernetes monitoring mixins are, what problem they try to address and how you can use them. A worked example with a detailed explanation should help the reader understand the presented concepts.

The definition should make clear what the community means when it uses the term. It also explains the roots of the term and what it includes. Since a mixin is essentially a collection of actions and tools, it is important to specify both of them.

By investigating the problem faced before it was used, you will be able to understand the motivation behind its conception. This will also reveal why specific tools are being used when it comes to working with mixins. Since the problem itself lies in other areas of Cloud Native applications, by understanding the considerations for this specific case, you will be able to utilise similar approaches in relevant issues.

In this article we will create a .jsonnet file and an accompanying Docker image to interact with the kubernetes mixin libraries.

Definition

The precise definition of the term monitoring mixin can be found in https://monitoring.mixins.dev/:

A mixin is a set of Grafana dashboards and Prometheus rules and alerts, packaged together in a reusable and extensible bundle. Mixins are written in jsonnet, and are typically installed and updated with jsonnet-bundler.

Since the above definition has a lot of terms, we need to decompose it in order to deeply understand what it means. A resource that can help us with that is the Prometheus Monitoring Mixins Design Document where more details are mentioned. As per this document:

A monitoring mixin is a package of configuration containing Prometheus alerts, Prometheus recording rules and Grafana dashboards. Mixins will be maintained in version-controlled repos (eg: Git) as a set of files. Versioning of mixins will be provided by the version control system; mixins themselves should not contain multiple versions.

Mixins are intended just for the combination of Prometheus and Grafana, and no other monitoring or visualisation systems. Mixins are intended to be opinionated about the choice of monitoring technology.

Mixins should not however be opinionated about how this configuration should be deployed; they should not contain manifests for deploying Prometheus and Grafana on Kubernetes, for instance. Multiple, separate projects can and should exist to help deploy mixins; we will provide examples of how to do this on Kubernetes, and a tool for integrating with traditional config management systems.

The above means that by using the term monitoring mixin we refer to a package of configuration files for Prometheus Recording Rules, Prometheus Alerting Rules and Grafana Dashboards that are produced by using jsonnet. It is very specific about the tools it is meant to configure (only Prometheus for monitoring and Grafana for visualization) but also about their sub-components (for Prometheus only Rules and Alerts and for Grafana only Dashboards).

The problem

Domain

The problem domain is related to the monitoring and alerting in a Kubernetes cluster. Given that you have already installed Prometheus to gather metrics and Grafana to view them, there is no easy way to create rules, alerts and dashboards. The problem includes the creation, modification, versioning and distribution of the configuration files for Prometheus Recording Rules, Prometheus Alerting Rules and Grafana Dashboards.

Another aspect of the problem is how to apply the configuration files. Since Prometheus and Grafana can be installed using various methods, their configuration is not a trivial task. For instance, Prometheus can be installed via custom YAML files, using Helm package manager or via Prometheus Operator. Each option comes with its trade-offs regarding the installation and configuration of the Prometheus components. The monitoring mixin specifies which installation method it supports.

Configuration complexity for Prometheus and Grafana

As described in the article Everything You Need to Know About Monitoring Mixins, despite the existing ready-made resources for each of the components, it is still complex to wire them together. Each component is designed to perform a specific task. To do so in a variety of environments (Virtual Machines, Docker containers, Kubernetes pods etc.) the designers have introduced a number of configuration options. Since each of the components is highly configurable, it becomes challenging to create a bundle of them that will work in an existing Kubernetes cluster.

Moreover, their core functionality in a dynamic Kubernetes cluster makes it imperative to be easily configurable. In clusters where services and components are added regularly, the monitoring and alerting systems need to be extended and modified regularly too. This process is especially intense in cases of refactoring where new resources are added or old ones modified and even revoked.

Another aspect of the problem comes from the limitation of how Kubernetes manifests are applied in a cluster. Specifically, the accepted formats are YAML and JSON. Both of these formats are static and intended to store data. They are not templates, so you don’t have the option to use variables, conditional statements or loops. This is limiting when you try to generalise a set of Prometheus configurations and Grafana dashboards, since each administrator or developer will need to edit those .json or .yaml files to make them work in their environment. If those changes are done manually, the procedure becomes tedious and error-prone.

The above shows the need for a way to package Prometheus rules and alerts and Grafana dashboards in distributable and configurable bundles. This should be done taking into account the inherent limitations of the .json and .yaml manifest files, which are the end-resources that the Kubernetes cluster really cares about.

A proven solution—Kubernetes monitoring mixins

How the Kubernetes monitoring mixins solve the problem

The Kubernetes monitoring mixins solve the described problem by adopting a series of practices and tools. These aim to create reusable, configurable and extensible packages that are easily installable by non-monitoring experts. These packages can also be stored in the version control system alongside the installation manifests of Prometheus and Grafana.

The first step towards the solution is defining the components that should be already present on the system and also restricting the configuration aspects that the solution is responsible for. Prometheus and Grafana need to be already installed on the system. Also, the mixin itself will create configuration files only for the Prometheus Recording Rules, Prometheus Alerting Rules and Grafana Dashboards.

To achieve reusability, configurability and extensibility, the monitoring mixins leverage jsonnet. Specifically, they provide jsonnet libraries (with extension .libsonnet) for the components they are responsible for. Jsonnet is a data templating language that produces the needed .json manifest files. It provides the required templating capabilities: variables, conditional statements, functions and loops. This means that, using the provided libraries, we are able to customise the configuration files according to the needs of our existing setup.
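As a rough illustration of those templating capabilities, the following self-contained sketch generates one alerting rule per namespace. The namespace list, the alert name, the thresholds and the helper severityFor are hypothetical and are not taken from kubernetes-mixin; only the metric name comes from kube-state-metrics.

```jsonnet
// Hypothetical example: these names are illustrative, not part of kubernetes-mixin.
local namespaces = ['frontend', 'backend'];  // a variable

// A function with a conditional expression.
local severityFor(ns) = if ns == 'backend' then 'critical' else 'warning';

{
  groups: [
    {
      name: 'example-pod-restarts',
      rules: [
        {
          alert: 'PodRestartingOften',
          // String formatting injects the namespace into the PromQL expression.
          expr: 'increase(kube_pod_container_status_restarts_total{namespace="%s"}[1h]) > 5' % ns,
          // 'for' is a reserved word in jsonnet, so the field name must be quoted.
          'for': '15m',
          labels: { severity: severityFor(ns) },
        }
        for ns in namespaces  // a loop, written as an array comprehension
      ],
    },
  ],
}
```

Evaluating this file with the jsonnet binary yields plain JSON with two rules, one per namespace, which is the kind of static output that Prometheus can consume after conversion to YAML.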

The monitoring mixins allow for easy distribution and versioning since they live in code repositories. They require minimal input, limited to the customization needed by the existing Kubernetes cluster setup. That input is again provided in the form of a jsonnet file, which means that we can version control our jsonnet files along with the rest of the manifest files.

The above adds up to a set of jsonnet libraries with a jsonnet interface that allows their usage. Upon instructing the libraries to produce configuration according to the existing cluster setup (using the jsonnet interface), the expected result is a set of manifest files ready to be applied to the cluster.

How the specific kubernetes-mixin is structured

As mentioned in the Introduction, the use case of the kubernetes-mixin will be analysed. As can be seen in its repository, it has a directory named after each of the three components that are going to be configured:

- alerts for the Prometheus Alerts

- rules for the Prometheus Rules

- dashboards for the Grafana Dashboards

Each directory contains the relevant jsonnet libraries.

The repository also provides information about the versioning of the underlying jsonnet libraries and instructions on how to produce and use the manifest files that are expected as an outcome from those libraries. The production of the manifest files has been reduced to just calling the given jsonnet libraries with just some configuration. As can be seen, the instructions on how to apply the manifest files heavily depend on the way that Prometheus and Grafana have been set up.

An overview/explanation of how Jsonnet is involved in the kubernetes-mixin

Whilst the detailed explanation of how jsonnet works is outside of the scope of the current article, it is useful to see how jsonnet is being utilised in order to provide the required flexibility to the kubernetes-mixin use case.

The directory structure discussed in the previous section allows for easy inspection of the included libraries. Each of the alerts, rules and dashboards directories has a set of .libsonnet files. These files are the jsonnet libraries. The lib directory works as an entry point since it combines the aforementioned libraries. It has a set of .libsonnet and .jsonnet files that include the library files.

The configuration of the different components is done using the file named config.libsonnet in the root directory. There you may see the basic configuration that will be used by the libraries to produce the manifest files. This configuration can be modified and extended by your own jsonnet file.
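The override mechanism can be sketched with a toy library. Everything below (toyMixin, the rule name, the selector values) is a hypothetical stand-in and not the actual content of config.libsonnet; it only shows how a hidden _config object with defaults can be extended from your own file.

```jsonnet
// Toy stand-in for a mixin library; hypothetical, not the real kubernetes-mixin code.
local toyMixin = {
  // Hidden field (::) holding the defaults; hidden fields are not rendered in the output.
  _config:: {
    kubeletSelector: 'job="kubelet"',
    rateInterval: '5m',  // untouched by the override below, so it keeps its default
  },
  prometheusRules:: {
    groups: [
      {
        name: 'toy.rules',
        rules: [
          {
            record: 'toy:kubelet_up:count',
            // $ is the outermost object; thanks to late binding it sees overridden values.
            expr: 'count(up{%s})' % $._config.kubeletSelector,
          },
        ],
      },
    ],
  },
};

local customised = toyMixin {
  // +:: merges into the existing hidden object instead of replacing it.
  _config+:: {
    kubeletSelector: 'job="kubernetes-kubelet"',
  },
};

// Only visible fields are rendered, so expose the rules under a visible key.
{ 'prometheus-rules': customised.prometheusRules }
```

In the rendered output the rule expression uses the overridden selector, even though the rule itself was defined inside the library. This is the property that lets a mixin ship defaults while still adapting to the job names of an existing cluster.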

Example

We are going to use the kubernetes-mixin. As mentioned in the description of the repository, it is "A set of Grafana dashboards and Prometheus alerts for Kubernetes".

Prerequisites

Since we want to see the monitoring mixin in action, we need a Kubernetes cluster. One easy way to set up such a cluster on your local machine is by using Kind. It is lightweight, fast and recommended for local development. To install Kind on your local machine just follow the instructions here. Then, you may start your local cluster by using these commands.

To interact with your cluster you will also need kubectl, which lets you inspect and create resources on your local Kind Kubernetes cluster. You may expect kubectl to automatically be able to communicate with your Kind cluster since they both use the default paths for placing the needed configuration files.

Another thing to note is that the example commands refer to a Linux host machine. They will be simple and they have their respective alternatives in the other host environments too.

Work with kubernetes-mixin jsonnet

The goal is to create a .jsonnet file with instructions on how to interact with the libraries in kubernetes-mixin. Start by creating the following directory structure:

~/k8s-mixin-example
├── jsonnet
└── manifests

The directory jsonnet will host your .jsonnet file with instructions on how to create the Prometheus and Grafana configuration files. The configuration files will be stored later on inside the manifests directory.

Create a file jsonnet/mixin-simple.jsonnet with the following content:

local utils = import "../vendor/kubernetes-mixin/lib/utils.libsonnet";

local conf = (import "../vendor/kubernetes-mixin/mixin.libsonnet")
+ {
  _config+:: {
    kubeStateMetricsSelector: 'job="kube-state-metrics"',
    cadvisorSelector: 'job="kubernetes-cadvisor"',
    nodeExporterSelector: 'job="kubernetes-node-exporter"',
    kubeletSelector: 'job="kubernetes-kubelet"',
    grafanaK8s+:: {
      dashboardNamePrefix: 'Mixin / ',
      dashboardTags: ['kubernetes', 'infrastructure'],
    },
    prometheusAlerts+:: {
      groups: ['kubernetes-resources'],
    }
  },
}
+ {
  prometheusAlerts+:: {
    /* From Alerts select only the 'kubernetes-system':
       https://github.com/kubernetes-monitoring/kubernetes-mixin/blob/master/alerts/system_alerts.libsonnet#L9
    */
    groups:
      std.filter(
        function(alertGroup)
          alertGroup.name == 'kubernetes-system'
        , super.groups
      )
  }
}
+ {
  prometheusRules+:: {
    /* From Rules select only the 'k8s.rules':
       https://github.com/kubernetes-monitoring/kubernetes-mixin/blob/master/rules/apps.libsonnet#L10
    */
    groups:
      std.filter(
        function(alertGroup)
          alertGroup.name == 'k8s.rules'
        , super.groups
      )
  }
}
+ {
  grafanaDashboards+:: {}
}
;

{ ['prometheus-alerts']: conf.prometheusAlerts }
+
{ ['prometheus-rules']: conf.prometheusRules }
+
{ ['grafana-dashboard']: conf.grafanaDashboards['kubelet.json'], }

The above jsonnet commands will configure the components. You can see that they refer to:

- general configuration parameters with "_config"

- prometheusAlerts configuration. Specifically it will just select the kubernetes-system alerts

- prometheusRules configuration. Specifically it will just select the k8s.rules

- grafanaDashboards, where it selects just the kubelet.json ones

While the official kubernetes-mixin has a set of commands that will produce all the Prometheus Alerts, Rules and Grafana Dashboards, the jsonnet/mixin-simple.jsonnet file makes use of the underlying libraries in order to produce just a subset of them. It shows how you may leverage the jsonnet libraries from the mixin to produce only what you need. In our case what we need is a single group of Prometheus alerts, a single group of Prometheus rules and a single Grafana dashboard.
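The group selection can be tried in isolation, without the vendored libraries. The sketch below uses a hypothetical toyMixin with two empty alert groups in place of the real kubernetes-mixin, and applies the same std.filter over super.groups pattern.

```jsonnet
// Toy stand-in for the mixin; the group names mirror the article, the content is hypothetical.
local toyMixin = {
  prometheusAlerts:: {
    groups: [
      { name: 'kubernetes-system', rules: [] },
      { name: 'kubernetes-resources', rules: [] },
    ],
  },
};

local onlySystem = toyMixin {
  prometheusAlerts+:: {
    // super.groups is the list defined by the library; keep a single group from it.
    groups: std.filter(
      function(group) group.name == 'kubernetes-system',
      super.groups
    ),
  },
};

// Renders an object whose "groups" list contains only the 'kubernetes-system' group.
{ 'prometheus-alerts': onlySystem.prometheusAlerts }
```

Filtering on super.groups, rather than redefining the list, means the selection keeps working when the upstream library adds or changes rules inside the selected group.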

Create and use a Docker image that will produce configuration

To minimise the tools that need installation, you can pack some of them in a Docker image. Building this image installs the jsonnet binaries and downloads the needed libraries. Then you can just mount your configuration into a container and it will produce the Prometheus alerts and rules and the Grafana dashboard.

The Dockerfile that will produce the Docker image has the following contents:

FROM golang:1.16-alpine3.14 AS golang
FROM alpine:3.14 AS runtime

# Download binaries
FROM golang AS builder
RUN apk add git \
    && GO111MODULE=on go get -u github.com/google/go-jsonnet/cmd/jsonnet@v0.16.0 \
    && go get github.com/jsonnet-bundler/jsonnet-bundler/cmd/jb@v0.4.0 \
    && GO111MODULE=on go get github.com/mikefarah/yq/v3@3.3.2

# Build our final image
FROM runtime
COPY --from=builder /go/bin/jsonnet /usr/local/bin/jsonnet
COPY --from=builder /go/bin/jb /usr/local/bin/jb
COPY --from=builder /go/bin/yq /usr/local/bin/yq
RUN apk add bash make git
WORKDIR /app
RUN jb init && jb install github.com/kubernetes-monitoring/kubernetes-mixin
CMD jsonnet -J vendor jsonnet/mixin-simple.jsonnet -m . > json-files-list && \
    cat json-files-list | grep -v grafana | xargs -i{} /bin/sh -c 'cat {} | yq r -P - > manifests/{}.yaml && rm {}' && \
    cat json-files-list | grep grafana | xargs -i{} /bin/sh -c 'cat {} > manifests/{}.json && rm {}'

In the above multistage Dockerfile the build steps are:

1. Using a golang alpine base, download jsonnet, jb and yq and build their binaries

2. Copy the jsonnet, jb and yq binaries to the image that the container will use

3. Use jb to download the kubernetes-mixin libraries into /app/vendor

4. Define a command to run by default

The command is complex and may require some further explanation. It comprises three commands chained with the operator &&. The first part, jsonnet -J vendor jsonnet/mixin-simple.jsonnet -m . > json-files-list, evaluates jsonnet/mixin-simple.jsonnet, writes one output file per top-level field into the current directory (the -m flag) and saves the resulting filenames into the file json-files-list. The second command reads the list of files in json-files-list and keeps only the ones that do not contain the term “grafana”. It then converts those JSON files to YAML, writes them under the manifests directory and removes the originals. The third command reads the same list, selects the files that contain the term “grafana” and moves them under the manifests directory with a .json extension.
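The multi-file behaviour of the first command depends on the shape of the top-level object. The following minimal sketch uses hypothetical content; only the two keys mirror the ones used in this example.

```jsonnet
// Hypothetical content; only the shape of the top-level object matters here.
// When evaluated with the -m flag, each top-level field is written to its own
// file named after the key, and the written filenames are printed to stdout.
{
  'prometheus-alerts': { groups: [] },
  'grafana-dashboard': { title: 'Example', panels: [] },
}
```

Saved as, say, example.jsonnet and evaluated with jsonnet -m . example.jsonnet, this should write the files prometheus-alerts and grafana-dashboard into the current directory. The keys carry no extension, which is why the Dockerfile command appends .yaml or .json itself when placing the files under manifests.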

The conversion of the files from JSON to YAML (except for the Grafana one) is done in order to comply with the formats expected by Prometheus and Grafana, which will be the consumers of those files.

To create the Docker image you can use the following command:

docker build -t manifests-factory:v0.1.0 -f Dockerfile .

This will create the image named manifests-factory:v0.1.0 that you will use to spin up the container that will use the file jsonnet/mixin-simple.jsonnet to produce the manifest files.

Next, use the docker image you just created via the following command:

docker run --rm -ti -v ~/k8s-mixin-example/jsonnet:/app/jsonnet -v ~/k8s-mixin-example/manifests:/app/manifests manifests-factory:v0.1.0

The docker container has mounted two directories as volumes:

- ~/k8s-mixin-example/jsonnet where the jsonnet/mixin-simple.jsonnet exists (input)

- ~/k8s-mixin-example/manifests where the produced files will be placed (output)

By running the above command the expected result is three files:

~/k8s-mixin-example/manifests
├── grafana-dashboard.json
├── prometheus-alerts.yaml
└── prometheus-rules.yaml

Install and configure Prometheus and Grafana

As mentioned in the previous sections, Prometheus and Grafana should be already installed. Also, the kubernetes-mixin refers to the following options regarding how to use the configuration files you produced in the previous step.

You then have three options for deploying your dashboards

1. Generate the config files and deploy them yourself

2. Use ksonnet to deploy this mixin along with Prometheus and Grafana

3. Use prometheus-operator to deploy this mixin (TODO)

In this example, the focus is on option 1 which is more generic.

You need a way of installing Prometheus and Grafana and also instruct them to use the alerts, rules and dashboards you created on the previous steps. This installation also has to be compatible with the limitations mentioned above.

Installation instructions of Prometheus and Grafana are out of the scope of this example, so you may use the ingress-nginx repository that provides the manifests that you may use to get them in place. This means that you need to clone the repository:

[~/k8s-mixin-example]: git clone https://github.com/kubernetes/ingress-nginx.git

[~/k8s-mixin-example]: tree -L 1
.
├── ingress-nginx
├── jsonnet
└── manifests

Now you should have all the manifest files that will be needed, so it is time to install and configure Prometheus and Grafana on your running Kind cluster.

Prometheus

Start by creating the file manifests/prometheus-config.yaml with the following content:

global:
  scrape_interval: 10s
rule_files:
- rules.yaml
- alerts.yaml
scrape_configs:
- job_name: 'ingress-nginx-endpoints'
  kubernetes_sd_configs:
  - role: pod
    namespaces:
      names:
      - ingress-nginx
  relabel_configs:
  - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scrape]
    action: keep
    regex: true
  - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scheme]
    action: replace
    target_label: __scheme__
    regex: (https?)
  - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_path]
    action: replace
    target_label: __metrics_path__
    regex: (.+)
  - source_labels: [__address__, __meta_kubernetes_pod_annotation_prometheus_io_port]
    action: replace
    target_label: __address__
    regex: ([^:]+)(?::\d+)?;(\d+)
    replacement: $1:$2
  - source_labels: [__meta_kubernetes_service_name]
    regex: prometheus-server
    action: drop

In this configuration you may notice the rule_files entry. Its two files, rules.yaml and alerts.yaml, will be populated from manifests/prometheus-rules.yaml and manifests/prometheus-alerts.yaml respectively.

In order for Prometheus to use your configuration files, they need to be mounted via a ConfigMap. The steps are:

[~/k8s-mixin-example]: kubectl create ns ingress-nginx

[~/k8s-mixin-example]: kubectl apply -k ingress-nginx/deploy/prometheus

[~/k8s-mixin-example]: kubectl create -n ingress-nginx configmap $(kubectl get configmaps -n ingress-nginx | grep prometheus | awk '{print $1}') --from-file=prometheus.yaml=manifests/prometheus-config.yaml --from-file=rules.yaml=manifests/prometheus-rules.yaml --from-file=alerts.yaml=manifests/prometheus-alerts.yaml --dry-run=client -o yaml | kubectl apply -f -

[~/k8s-mixin-example]: kubectl delete pod -n ingress-nginx $(kubectl get pods -n ingress-nginx | grep prometheus-server | awk '{print $1}')

These steps create the namespace ingress-nginx, apply the manifest files that create the needed Prometheus resources, update the Prometheus configuration to take into account the alerts and rules that you have created, and lastly delete the prometheus-server pod. The deletion causes the pod to be re-created, this time using the correct configuration.

You can verify that the alerts and rules are in place by visiting the Prometheus UI. To do so, first port forward its service:

kubectl port-forward -n ingress-nginx svc/prometheus-server 9090:9090

and then, with your browser, visit http://localhost:9090 and check its alerts and rules pages.

Grafana

The case for Grafana is simpler since it involves using the web interface. To do so, you will need to first deploy Grafana via:

[~/k8s-mixin-example]: kubectl apply -k ingress-nginx/deploy/grafana

After the grafana pod is Ready you can expose the grafana service:

[~/k8s-mixin-example]: kubectl port-forward -n ingress-nginx svc/grafana 3000:3000

With your browser go to http://localhost:3000 and use “admin” as username and password. Then navigate to Create > Import and click the button Upload JSON file. There you may select your ~/k8s-mixin-example/manifests/grafana-dashboard.json file which will create the dashboards under General/Mixin/Kubelet. As a data source the URL http://prometheus-server:9090 can be used.

Conclusion

In this article, we explored the Kubernetes monitoring mixins. We showed that they are basically a way of building Prometheus Alerts, Rules and Grafana Dashboards by using jsonnet libraries. The latter solves the problem of distributing the code and also dealing with the high level of customization needed in the required manifest files.

The provided example shows how you can use a mixin. Specifically, how you may configure it, produce the manifest files and use them in your local Kubernetes cluster. With instructions on how to use the needed tools and how they interact, it is intended to be a detailed example that you may use to develop more complex configurations.

Whilst our main focus was on a specific project, the idea of developing jsonnet libraries to produce configuration can be used in other aspects too in the Kubernetes ecosystem. Jsonnet can be leveraged in order to modify JSON templates by injecting new blocks, removing and adding properties etc. This means fewer manual and error-prone tasks when it comes to producing the manifest files. At the time of writing of this article jsonnet libraries are being offered by a number of tools in order to customise them. An outstanding case is the kube-prometheus that offers a jsonnet interface.

Keep an eye out for a jsonnet deep dive article in the near future where more advanced concepts regarding its usage will be presented.
