GitOps, the idea of fully managing applications and infrastructure through a Git-based workflow, has gained a lot of traction recently. Nothing shows this better than a new generation of deployment tools that treat GitOps as the main organising principle for Continuous Delivery and Change Management.

The tools we are going to examine in this post are FluxCD, ArgoCD and JenkinsX, all of them focused on deployment of applications to Kubernetes clusters.

There is much confusion in the industry right now about which tool to pick and how they relate to generic CI/CD tools like Jenkins, GitLab CI, or GitHub Actions. While these other tools do a good job implementing generic pipelines and workflows to build, test, release, and deploy applications, for this evaluation we selected the three tools that implement a GitOps style of application deployments out-of-the-box, based on changes in Git repositories.

TL;DR

If you already know what GitOps is and don't want to read a long description of each tool, you can jump to the end and just read the Conclusion section!

A Quick Intro to GitOps

The term was coined by Alexis Richardson at Weaveworks in a blog post titled ‘Operations by Pull Request’. The basic idea is to manage resources on Kubernetes solely by committing changes to Git and approving them through pull requests.

If that sounds a bit vague, let’s define these four rules of GitOps to make it more grounded:

- Store all Kubernetes resource configuration in Git.

- Use only pull requests to modify resources on that Git repo.

- Once Git is modified, apply the changes to the cluster immediately and fully automatically.

- If the actual state drifts from the desired state, either correct it or alert operators about it.

When we combine these, we get a system where all changes to an application environment are logged, and we can apply our Git-based tooling to manage the history. We can also have approval processes by using pull requests.
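The last two rules amount to a reconciliation loop. Here is a minimal Python sketch of one pass of such a loop, assuming resources are plain dictionaries keyed by name. The names `reconcile`, `desired`, `last_applied`, and `actual` are our own illustrations and are not taken from any of the tools discussed in this post.

```python
from typing import Dict, List, Tuple

State = Dict[str, dict]


def reconcile(
    desired: State,
    last_applied: State,
    actual: State,
    auto_correct: bool = True,
) -> Tuple[State, List[str]]:
    """Run one reconciliation pass and return (new cluster state, alerts).

    desired:      resources declared in Git
    last_applied: resources as they were applied in the previous pass
    actual:       resources currently observed in the cluster
    """
    new_state = dict(actual)
    alerts: List[str] = []
    for name, spec in desired.items():
        if last_applied.get(name) != spec:
            # Git changed since the last pass: apply the change (rule 3).
            new_state[name] = spec
        elif actual.get(name) != spec:
            # Git is unchanged but the cluster differs: drift (rule 4).
            if auto_correct:
                new_state[name] = spec
            else:
                alerts.append(f"drift detected in {name}")
    return new_state, alerts
```

Calling `reconcile` with `auto_correct=False` leaves drifted resources untouched and only reports them, which corresponds to the "alert operators" half of the fourth rule.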

Architecturally, GitOps will allow us to separate the Continuous Integration (CI) flow of an application from the deployment process, as the deployment process will kick off based on changes to a GitOps repo, not as part of the CI process. (Here’s some more on GitOps and CI/CD.)

FluxCD

Flux is described as a GitOps operator for Kubernetes that synchronises the state of manifests in a Git repository to what is running in a cluster. Among the three tools in this evaluation, it is by far the simplest one. In fact, it’s amazing to see how a GitOps workflow can be set up with only a few steps.

Flux runs in the cluster to which updates are applied, and its main function is to watch a remote Git repository and apply changes to Kubernetes manifests. It was initially developed by Weaveworks and is now a Cloud Native Computing Foundation project, available on GitHub under the Apache 2.0 license.

With its exclusive focus on the deployment part of the software delivery cycle, specifically the synchronisation of Git repositories (and container registries) with the version and state of workloads in a cluster, the tool is easy to install and maintain.

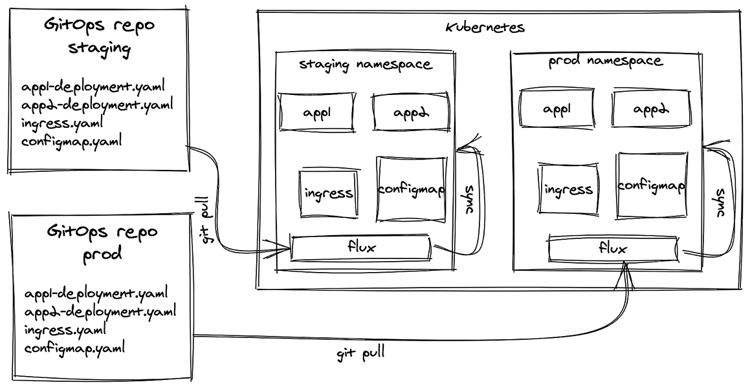

Each installation can watch a single remote repository, and it can apply changes only in the namespaces that its underlying service account has permission to change. While this seems limiting at first glance, more elaborate scenarios with multiple teams, each with their own Git repositories and target deployment environments, can be set up with a corresponding number of Flux instances, each one with its own specific RBAC permissions.

Installation

Flux installation is as easy as deploying any other typical pod in a cluster. It officially supports installations based on Helm Charts and Kustomize. There’s also `fluxctl`, a command line interface (CLI) tool, released as a binary, to help with the installation process and to interact with a running Flux daemon in the cluster to perform some management tasks.

The simplest way to install Flux in a cluster is by applying a small set of manifests that can be generated using the `fluxctl install` command with the appropriate arguments, including the URL of the Git repository that will be watched.

GitOps deployments

The main feature of Flux is that it periodically pulls a remote Git repository and applies its manifest files to the cluster if there are new changes, in true GitOps fashion. This can be used to deploy applications, but also to maintain any sort of cluster configuration expressed as Kubernetes manifests. The synchronisation can also be triggered manually with the `fluxctl sync` command.

Management

Considering Flux is practically a single binary deployed in a Pod, from a cluster administrator’s point of view there’s very little to manage after the installation. Changes in configuration or upgrades to newer versions can be done by reapplying the same manifests with new values, or by using Kustomize or Helm if they were used for the installation.

Besides the installation, the `fluxctl` CLI can help with a few other tasks, like triggering a synchronisation right away or listing the related configurations and workloads deployed in the cluster.

Multi-tenancy

With very few assumptions about the structure of the remote Git repositories and target namespaces, Flux doesn’t have a multi-tenancy mode.

Being a simple component watching a repository and applying its changes, it’s quite trivial to use different instances of Flux in the same cluster, each one watching a different remote Git repository and synchronising their changes in different target namespaces. This possibility would allow separate teams to have their own Git repositories.

With that said, it’s up to the teams to choose the layout of the repositories and how to map applications and target environments represented by namespaces in the cluster. To provide isolation between teams, different repositories could be used, and therefore different Flux instances to watch each of them.

While having more than one Flux pod running in the cluster may add some management overhead, it would allow teams to manage their own environment repositories with proper permissions to commit changes and to approve pull requests. Also, it would enable some degree of isolation between the namespaces, with more specific RBAC settings for each of the service accounts used by the different Flux instances in the cluster.

Multi-cluster

For the same reason—simplicity—Flux could be used in a multi-cluster scenario. Different clusters would have their own instances of Flux watching changes in remote repositories. Given the possibility of specifying a directory in a repo to be watched, teams have the same flexibility to choose the best repository layout for a multi-cluster case. One single repository could be watched by two or more Flux instances from different clusters, each one watching a particular directory, or separate repositories could be used.
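To make the single-repository layout more concrete, here is a small Python sketch of how a shared repository could be split between instances by directory. The directory names and the function `manifests_for_path` are hypothetical, and the sketch only illustrates the idea of a watched path. It does not reproduce how Flux itself selects files.

```python
from pathlib import PurePosixPath
from typing import List


def manifests_for_path(repo_files: List[str], watched_path: str) -> List[str]:
    """Return the YAML manifests that live somewhere under `watched_path`."""
    root = PurePosixPath(watched_path)
    selected = []
    for name in repo_files:
        path = PurePosixPath(name)
        # Keep only manifest files whose parent directories include the root.
        if path.suffix in {".yaml", ".yml"} and root in path.parents:
            selected.append(name)
    return selected


# Hypothetical layout: one repository, one directory per cluster.
files = [
    "clusters/staging/web.yaml",
    "clusters/production/web.yaml",
    "clusters/staging/README.md",
]
staging_manifests = manifests_for_path(files, "clusters/staging")
```

Each instance would be configured with its own watched directory, so a change under `clusters/staging` never reaches the production cluster.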

Additional features

Auto deploy new versions of container images

Flux can optionally update workloads in the cluster when new versions of their container images become available. If this is enabled, either by running `fluxctl automate` or by adding an annotation to the deployment manifest of the workload, Flux polls the registry for image metadata and, if a new version of the image in use is available, updates the deployment with the new image version. When doing so, Flux writes a commit back to the original Git repository to update the image version used in the manifest, so Git remains the source of truth for what is running in the cluster.
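The update step can be sketched in a few lines of Python. This is only an illustration under our own assumptions: image tags follow a simple `major.minor.patch` scheme, the highest version wins, and the manifest is treated as plain text. Flux's actual policy for ranking images may differ, and the function names are hypothetical.

```python
import re
from typing import List, Optional, Tuple


def parse_version(tag: str) -> Optional[Tuple[int, int, int]]:
    """Parse tags such as '1.4.2' or 'v1.4.2'; return None for anything else."""
    match = re.fullmatch(r"v?(\d+)\.(\d+)\.(\d+)", tag)
    if match is None:
        return None
    major, minor, patch = (int(part) for part in match.groups())
    return (major, minor, patch)


def newest_tag(tags: List[str]) -> Optional[str]:
    """Return the tag with the highest version, ignoring non-version tags."""
    versioned = [
        (parse_version(tag), tag) for tag in tags if parse_version(tag) is not None
    ]
    if not versioned:
        return None
    return max(versioned)[1]


def bump_image(manifest: str, image: str, tags: List[str]) -> str:
    """Return the manifest text with `image` pinned to the newest tag."""
    latest = newest_tag(tags)
    if latest is None:
        return manifest
    pattern = re.compile(rf"(image:\s*{re.escape(image)}):\S+")
    return pattern.sub(lambda m: f"{m.group(1)}:{latest}", manifest)
```

In a GitOps setup, the text returned by `bump_image` would be committed back to the repository rather than applied directly, so the change still flows through Git.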

Re-apply drifted resources

Regardless of new changes coming from the Git repository, Flux synchronises the state of the workloads by re-applying their manifests at a regular interval. This is useful when the state of applications or configuration is changed from outside the GitOps workflow.

Garbage collection

When resources get deleted from the environment repository, Flux can delete them from the cluster. This potentially dangerous operation requires tracking which resources have been created by the GitOps workflow.

With this feature enabled, Flux labels all the resources it deploys during the synchronisation loop with a specific label based on the source (the combination of repository URL, branch, and path specified during the installation). During the garbage collection loop, Flux queries the API server to retrieve all objects marked as coming from that source and deletes those that were not synchronised during the previous phase.
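The two phases described above can be sketched as follows. This is a simplified in-memory model, not Flux's implementation: the cluster is a dictionary from resource name to labels, and the label key `example.org/sync-source` and the hashing scheme in `source_id` are assumptions made for the example.

```python
import hashlib
from typing import Dict, Set

SYNC_LABEL = "example.org/sync-source"  # hypothetical label key


def source_id(repo_url: str, branch: str, path: str) -> str:
    """Derive a short, stable identifier for a (repository, branch, path) source."""
    digest = hashlib.sha256(f"{repo_url}|{branch}|{path}".encode()).hexdigest()
    return digest[:12]


def sync_and_collect(
    cluster: Dict[str, Dict[str, str]], manifests: Set[str], source: str
) -> Set[str]:
    """Apply `manifests` to `cluster`, then delete stale resources.

    Returns the names of the resources that were garbage collected.
    """
    # Synchronisation phase: label everything that comes from this source.
    for name in manifests:
        cluster.setdefault(name, {})[SYNC_LABEL] = source

    # Garbage collection phase: remove labelled resources that were not synced.
    stale = {
        name
        for name, labels in cluster.items()
        if labels.get(SYNC_LABEL) == source and name not in manifests
    }
    for name in stale:
        del cluster[name]
    return stale
```

Because only labelled resources are candidates for deletion, anything created outside the GitOps workflow, and therefore unlabelled, is left alone.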

Limitations

The biggest limitation of Flux is, probably by design, also its biggest advantage. It supports only one single repository. This feature makes it a quite simple tool, easy to understand and troubleshoot. Given it runs on a lightweight deployment, this limitation can be overcome with more than one instance in the cluster, as described in the previous ‘multi-tenancy’ section.

Summary: Should I use FluxCD?

Flux, by design, is focused exclusively on the deployment of manifests to a cluster. So you still need to have your CI tools to build and test your applications and, at the end, push your container images to a registry. On the other hand, the CI tools don’t need to access the cluster, since Flux pulls changes periodically from the inside, minimising the cluster’s exposure.

From the team organisation perspective, there is no built-in multi-tenancy support. Teams should agree on how to organise a single Git repository if a single Flux daemon will be used. With that said, if you are looking for a solution to automate your Kubernetes deployments with a simple and lightweight tool, Flux is a great option.

ArgoCD

ArgoCD is a declarative, GitOps-based Continuous Delivery (CD) tool for Kubernetes. It focuses on the management of application deployments, with an outstanding feature set covering several synchronisation options, user-access controls, status checks, and more. It has been developed since 2018 by Intuit and is open source on GitHub under an Apache 2.0 licence.

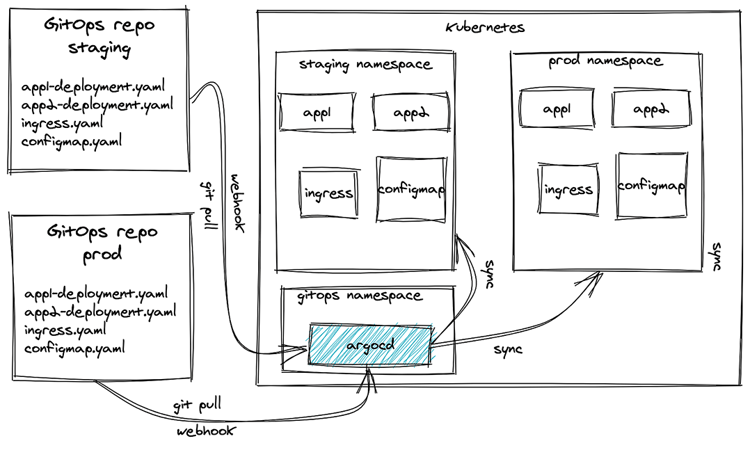

A deployment starts with changes in application manifests in Git repositories tracked by ArgoCD, similar to how Flux works. However, what makes it shine is the capability to manage multi-tenant and multi-cluster deployments with fine controls. It can synchronise multiple applications of different teams to one or more Kubernetes clusters. Besides, it has a nice modern web UI, where users can see the status of their application deployments and administrators can manage projects and user access.

Installation and management

ArgoCD is installed and managed completely in a Kubernetes native way. It runs on Kubernetes in its own namespace and all configuration is stored in ConfigMaps, Secrets, and Custom Resources. This makes it possible to use a GitOps workflow for managing ArgoCD itself.

Supported manifest formats

ArgoCD sports the widest support of different formats in your GitOps repository. Based on the documentation it can handle:

- Kustomize applications

- Helm charts

- Ksonnet applications

- A directory of YAML/JSON manifests, including Jsonnet

- Any custom config management tool configured as a config management plugin

Features

ArgoCD really shines when it comes to important features like multi-tenancy, but also has a myriad of customization options.

Multi-tenancy

A single instance of ArgoCD can handle many applications of different teams. It does this using the concept of Projects. A Project can hold multiple applications and is mapped to a Team. Members of a Team only see the Projects assigned to them and, by extension, only the applications in those Projects. This model is very similar to how resources are grouped in namespaces in Kubernetes.
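The relationship between Teams, Projects, and applications can be modelled in a few lines of Python. This is a toy in-memory model for illustration only. The `Project` class and `visible_applications` function are hypothetical and do not correspond to ArgoCD's API or its RBAC implementation.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class Project:
    name: str
    team: str
    applications: List[str] = field(default_factory=list)


def visible_applications(user_teams: Set[str], projects: List[Project]) -> List[str]:
    """Return the applications a user can see through their team membership."""
    return sorted(
        app
        for project in projects
        if project.team in user_teams
        for app in project.applications
    )


projects = [
    Project("payments", team="team-a", applications=["billing", "invoices"]),
    Project("search", team="team-b", applications=["indexer"]),
]
team_a_view = visible_applications({"team-a"}, projects)
```

A member of `team-a` sees only `billing` and `invoices`, while `indexer` stays hidden in the same way that resources in another namespace would.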

Multi-cluster

ArgoCD can sync applications on the Kubernetes cluster it is running on and can also manage external clusters. It can be configured to only have access to a restricted set of namespaces.

Credentials to the other clusters’ API Servers are stored as secrets in ArgoCD’s namespace. This can be a very useful feature for managing all your deployments from a single place, as long as you’re OK with exposing the other clusters’ APIs remotely. The built-in RBAC mechanism gives options to control access to deployments to different environments only to certain users.

Configuration drift detection

Kubernetes resources can drift away from the configuration stored in Git when an operator of the cluster changes resources without going through the GitOps workflow (i.e., without committing to Git). This is a problem in GitOps, as it’s very easy to make these changes and leave the state in the Git repo out of date. Similarly to Flux, ArgoCD can detect these changes and revert them, bringing the state back to what is defined in Git.

Garbage collection

Deleting resources is another issue with GitOps. When a resource such as a deployment is removed from Git, `kubectl apply` will ignore it (unless using the experimental `--prune` flag). This makes developers unable to delete resources they created as their interface to Kubernetes is the Git repo. Helm does solve this problem, but so does ArgoCD. It can remove obsolete resources during its own sync process, so you don’t have to use Helm for just this feature.

Summary: Should I use ArgoCD?

ArgoCD feels like the big brother of Flux. It’s a feature-rich tool with a flawless integration to Kubernetes. We really liked the fact that, even though it has a ton of features, its scope is very clear: it synchronises manifests from the Git repositories to the clusters.

Same as Flux, ArgoCD will not help you to test your applications or build Docker images. You can keep using your existing CI tooling for that. However, ArgoCD will need access to external clusters if you want to deploy to other target clusters.

Multi-tenancy can be very handy in any larger company. When you don’t have it, you will have to roll it yourself in some shape or form, so ArgoCD’s first-class support is very welcome. If you want to manage deployments from multiple applications with fine control over users’ access and manifest synchronisation settings, ArgoCD looks like a good option.

Jenkins X

Jenkins X is a full CI/CD solution built around GitOps and using Tekton under the hood. At first, judging by the name, we would expect to see the next version of the Jenkins we all know, with its jobs and plugins. In fact, Jenkins X has taken a different direction and has very little in common with the classic Jenkins.

It has left the master-worker node architecture of Jenkins behind and is built as a Kubernetes-native CI/CD engine. It is open source on GitHub under the Apache 2.0 license and is developed by CloudBees, which also offers a commercial distribution.

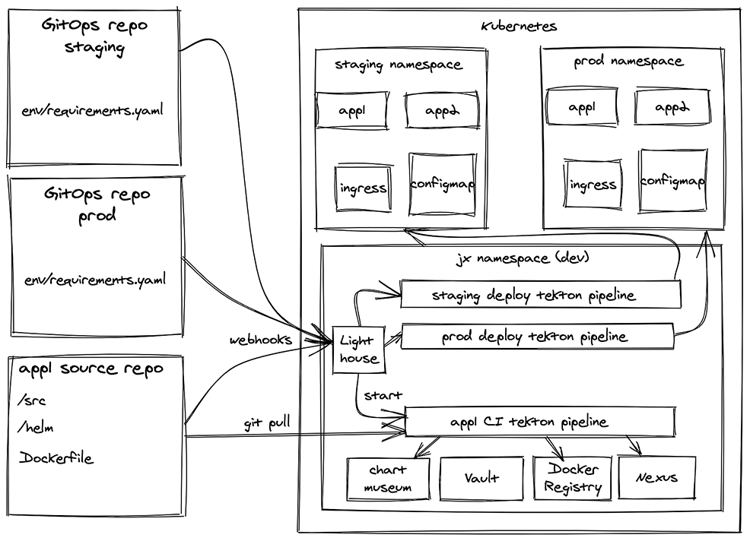

It’s important to note that, besides the GitOps-based deployment capabilities, Jenkins X also covers a wider spectrum of the development cycle, including the build-and-test phases from a typical CI pipeline, and build and storage of container images. To achieve this, an impressive set of Cloud Native projects is bundled and configured together, enabling a modern development workflow.

Jenkins X will set up Skaffold and Kaniko to build container images, and Helm charts to package Kubernetes manifests. Internally, it uses Tekton to run pipelines, and Lighthouse to integrate with your chosen Git provider (e.g. GitHub) and enable rich interactions in pull request threads.

Installation

The installation must be done with the `jx boot` command and it assumes a user with wide permissions to a Kubernetes cluster, enough to create namespaces, CRDs, set service accounts, and create GCP resources like Cloud Storage buckets.

At the beginning of the process, the boot configuration repository will be cloned and the user is prompted to accept (or change) some default options and to provide credentials of a GitHub user that will be used as a bot in PR threads.

By default, three Git repositories are provisioned in GitHub to keep the state of the environments in the cluster: dev, staging, and production. The dev environment (cloned from the boot configurations repository) is where the tooling runs. Staging and production are the ones where the user applications will run when deployed. Each environment is represented by its own namespace in the cluster and its own Git repository.

As we evaluate Jenkins X, there is an ongoing discussion to enable the boot installation process to use Helm 3 and helmfile.

GitOps deployments

Given a successful application build after a merge to master in the application repository, a new release version of the application Helm chart is created. To deploy this new version in one of the environments, the corresponding GitOps repository needs to be updated. For example, to deploy to production, the Git repository representing the production environment needs to be updated.

Following the GitOps principles, such a change in the environment repository would trigger a deployment pipeline using Tekton to synchronise what is described in the repository to what is running in the cluster. The deployment process can also be initiated by the `jx promote` command, which will update the environment repository and therefore trigger the synchronisation.
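The promotion flow can be sketched with a simple model in Python. We assume here that an environment repository boils down to a mapping from application name to chart version, and that its history is a list of such mappings. The functions `promote` and `pending_deployments` are hypothetical helpers for illustration, not part of the `jx` CLI.

```python
from typing import Dict, List

EnvState = Dict[str, str]  # application name -> released chart version


def promote(history: List[EnvState], app: str, version: str) -> List[EnvState]:
    """Return the repository history with a new commit pinning `app` to `version`."""
    current = dict(history[-1]) if history else {}
    if current.get(app) == version:
        return history  # already at this version, nothing to commit
    current[app] = version
    return history + [current]


def pending_deployments(env_state: EnvState, cluster_state: EnvState) -> EnvState:
    """Return the applications whose version in Git differs from the cluster."""
    return {
        app: version
        for app, version in env_state.items()
        if cluster_state.get(app) != version
    }


production = [{"web": "1.0.0"}]
production = promote(production, "web", "1.1.0")
to_deploy = pending_deployments(production[-1], {"web": "1.0.0"})
```

The key point is that promotion only writes to Git. The deployment itself happens as a consequence of the new commit, when the pipeline compares the repository with the cluster.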

Management

After the installation, some of the internal configurations can be tweaked by updating the config files in the dev environment repository. When the change is committed and pushed, a pipeline will be triggered in which Jenkins X will read the configurations and update itself. Thus Jenkins X itself is managed by a GitOps workflow.

Multi-tenancy

Considering there is no particular isolation between applications from different teams in a Jenkins X cluster, multi-tenancy is not supported. Users and applications would all share the same internal resources and components, and the same set of environments. Although there is the concept of teams, supported by the `jx team` subcommands, it’s a half-baked solution that basically copy-pastes the whole Jenkins X deployment.

Multi-cluster

The application environments, like staging and production, could be running in other clusters (described in the docs). Multi-cluster support is new in Jenkins X, and the area had been neglected for a bit too long. The effect of this neglect is that there are now different solutions with different downsides, and it looks like we have to wait a bit longer for a final blessed solution.

Features

Jenkins X comes with a large set of unique features that make the developer experience much smoother and more complete than with the other two tools.

Quickstart for new projects

For new projects, the `jx quickstart` command can help with the creation of a new Git repository containing an application skeleton with a Helm chart, and a predefined build pipeline using Skaffold to build Docker images. It also provisions the repository in GitHub, setting up a webhook to trigger builds and other actions after new commits in branches and pull requests. Existing projects can be set up with the `jx import` command, which will help with the creation of a Dockerfile and Helm chart, if not present yet, and set the appropriate webhooks.

Provisioning of Git repositories

Using the provided GitHub user credentials, during the installation the environment repositories (dev, staging, and production) are created in GitHub in a selected organisation or as user repositories. Also for new quickstart projects mentioned above, a repository is provisioned with the application skeleton.

Build pipelines

Jenkins X supports pipelines of tasks to be triggered after events from an application repository, for instance building the app and running tests for a new branch or pull request. There are default build pipelines for different programming languages and frameworks in what are called build packs. Applications should have a `jenkins-x.yaml` file pointing to their parent build packs, and pipelines can be customised.

ChatOps

ChatOps is the term used for development and operations activities managed in a chat thread, usually with the support of a bot user performing some automatic or requested actions. In Jenkins X, new pull requests (PR) created in an application or environment repository would trigger a pipeline run. The results of the pipeline steps are posted in the PR thread by the bot, the GitHub user configured during installation. Users can interact with the bot requesting additional tasks, like re-run tests, or approve and merge PRs.

To integrate webhooks, triggers and events, Jenkins X internally uses Prow, originally developed to run builds for Kubernetes projects in GitHub. Alternatively, Lighthouse is being developed by the Jenkins X team to provide similar capabilities but extending the support for different Git providers like BitBucket and GitLab.
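To give a feel for the bot side of this interaction, here is a small Python sketch that extracts commands from a pull request comment. It assumes Prow-style slash commands, one per line, and a deliberately tiny command set (`/retest`, `/approve`, `/lgtm`). The set actually available depends on how the bot is configured, and `parse_commands` is a hypothetical helper.

```python
import re
from typing import List

# Assumed command set for this example, modelled on Prow-style slash commands.
KNOWN_COMMANDS = {"retest", "approve", "lgtm"}


def parse_commands(comment: str) -> List[str]:
    """Return the known slash commands found at the start of a comment line."""
    commands = []
    for line in comment.splitlines():
        match = re.fullmatch(r"/([a-z-]+)(?:\s+.*)?", line.strip())
        if match and match.group(1) in KNOWN_COMMANDS:
            commands.append(match.group(1))
    return commands


requested = parse_commands("Looks good to me.\n/lgtm\n/approve")
```

A command only counts when it starts a line, so a sentence that merely mentions `/retest` in passing does not trigger anything.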

Preview environments

After a successful build, for instance in a newly created pull request in an application repository configured by Jenkins X, a temporary preview deployment for the current build is created. With this preview, it’s possible to manually check the changes being submitted as a PR (for instance, by opening the web API application in the browser) before merging it to master.

Limitations

While the other two tools have a far smaller scope than Jenkins X, they deliver perfectly on what they say they do. Jenkins X, on the other hand, is a complex all-encompassing solution, which sets different expectations. The most notable missing feature is multi-tenancy. When Jenkins X gets installed on a cluster, we would expect it to be able to serve all teams. Unfortunately, Jenkins X’s multi-tenancy model can be best compared to Flux’s, but while the latter is a simple tool for a simple job, Jenkins X installs a plethora of components, which we certainly would not want to duplicate for each team.

Summary: Should I use Jenkins X?

Jenkins X is an ambitious project that covers a wide spectrum of application development, putting together CI and CD in a single package. This is a great capability out of the box, making additional CI tools unnecessary. Existing projects, however, may have to adapt to a new tool chain to take advantage of tasks (builds, tests, and other jobs) being triggered after pushes to branches and pull requests.

The project is in active development, and it’s quite impressive how fast things are evolving. We were pleased to see improvements in the `jx` CLI usability, better status and project overviews in the web UI, and the addition of new features in such short periods of time. On the other hand, given the wide scope of capabilities, it may take some time to understand all the concepts and to become familiar with its internal components in case some default options need to be tweaked.

If you want a modern development and deployment workflow based on Git with rich ChatOps interactions in pull requests, just like Kubernetes and other open-source projects in GitHub have, without having to put together a whole set of independent tools and configuration by yourself, Jenkins X is definitely a great option. This kind of uber-tool is much needed in the Cloud Native space, as not every company has the time and resources to do the Ikea assembly work from a whole universe of different tools.

The biggest challenge in the adoption of Jenkins X is that it’s hard to know whether it’s the perfect solution for all of a company’s CI/CD requirements or an overly complex beast, which will deliver some quick wins initially but become a hurdle down the line. The only way out of this conundrum is for Jenkins X to be so feature complete that any company can adopt it knowing it will be the right tool down the line. See the limitations section on why this might not be the case today.

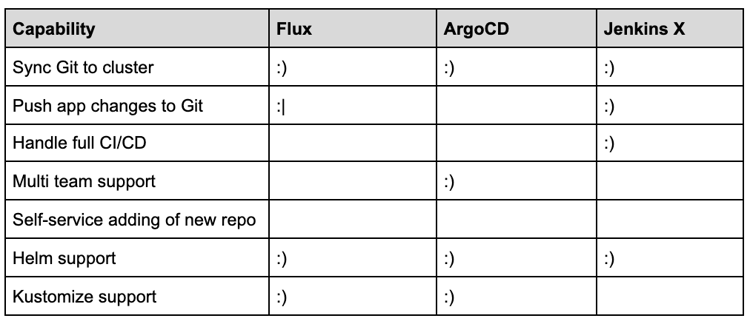

Conclusion

We believe all three projects, Flux, ArgoCD, and Jenkins X, have very interesting and powerful capabilities to manage deployments in Kubernetes, but each comes with pros and cons that should be evaluated for your specific case. These tools aren’t really competing with each other; rather, they aim to fulfil different use cases.

Flux is a small and lightweight component to enable GitOps-based deployments and probably will complement your current setup. It just needs access to one (and only one) Git repository and it can run with limited RBAC permissions.

ArgoCD can manage deployments for multiple applications in different clusters. It runs with cluster-wide permissions in the cluster but also manages access and permissions for teams and projects. It has a slick web interface to manage applications and projects, and offers a nice set of features to manage deployments.

Jenkins X bundles together an opinionated selection of tools to build a development workflow around repositories in GitHub (other providers will be supported soon), just as you would expect in modern open-source projects. It runs CI pipelines to build and run tests for applications, offers rich interactions in pull requests, and manages deployments based on changes in Git repositories.

Photo by Mihai Surdu on Unsplash
